After ChatGPT came out, I was surprised. On LinkedIn and other social media platforms, AI Grading was almost immediately identified as a use case.

People kept shouting: bots could grade our students. It seemed to be a popular idea. But AI grading sounded so weird to me.

Cut to April 2024.

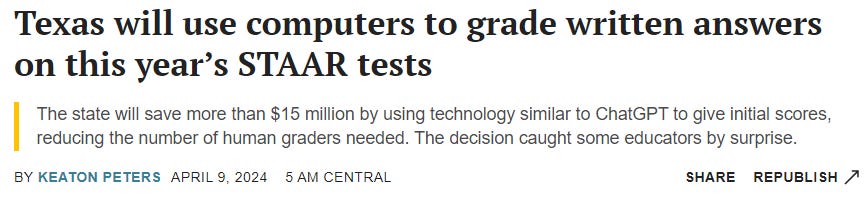

Texas said goodbye to many human graders for its STAAR tests. Hello, AI Graders!

What does that mean for the future of assessment?

Let’s Be Honest: Grading Standardized Tests Sucks

I was a trained GRE (Graduate Record Examination) tutor.

I remember the training very well. My instructor—who was seasoned, but not cynical—asked us how long we should take to grade a GRE essay.

10 minutes? Nope.

5 minutes? Nope.

3 minutes? Nope.

1 minute? Nope.

His answer: 30-45 seconds. That’s it.

Now, you cannot read much of anything in 30-45 seconds. You can only skim. And you can’t even skim well. You need to do a skimmy skim skim.

Just eyeball it. That’s all you have time for.

I mention this because I fear that we’re about to enter a “grass is always greener” scenario. The move towards AI Graders, if that is the move, will likely make us nostalgic for the bygone days when humans graded standardized tests.

We’ll have to resist that temptation.

Grading standardized tests sucks. Or at least, it sucks for a lot of people.

I think that’s important. Because if we’re going to think about AI graders, we need to do it practically. That means not romanticizing human graders.

That’s easier said than done. As soon as something is offloaded to AI, our “in my day, actual people did ________” impulse kicks in.

The context is important. The system already sucked. We’re essentially arguing over whether we’re replacing a sucky system with a cheaper, but still sucky, system.

Are We Ready for AI Graders?

I think other states will follow suit.

Not only that, but I think that educational institutions (including colleges and universities) will also be emboldened to dive into AI grading. In a recent Inside Higher Ed article, José Antonio Bowen and C. Edward Watson point to AI grading as one of the technology’s big benefits. Teachers can use AI to grade an assignment based on a rubric and to write a comment in the teacher’s own voice.

I guess.

But do students want that? Do instructors want that?

From an instructor’s perspective, I feel a little uneasy about the whole thing.

I find reading my students’ work valuable (I won’t say “like”) because it gives me information about how they think and see the world. I use that information to:

Plan future lessons.

Connect with my students.

Figure out whether to intervene.

I’m a little worried about what is lost when teachers hand something over to an AI grader.

I’m worried not only because the teacher loses valuable information, but because we’ll need specific, concrete ways to track the AI program’s algorithmic bias. (Consider, for example, when Amazon tested an AI recruiting tool and then found out that it systematically favored men.) Are we ready to track that kind of bias on a large scale, in real time?

Sure, there will be some human oversight.

But do we really know how much oversight we’re talking about? And are districts and states ready to step in immediately if, for example, it turns out that AI is making biased decisions?

I’m not sure.

Remaining Questions

I have more questions than answers.

What do students think?

What do the parents think?

Will this actually save money?

Is anyone thinking about privacy?

How will bias be tracked and mitigated?

What model are they using to grade the exams?

Who is in charge of prompting these AI systems?

I think there are a lot of questions here.

Whether this use of AI graders is good will largely depend on the answers to these questions.

What The Texas Decision Tells Us

My main takeaway may be surprising.

It’s this. The powers that be won’t use AI as a chance to rethink education and learning. They’ll use it to make the older, profitable ways of doing things—no matter how poorly they worked—faster and cheaper.

I mean, did they talk about getting rid of the standardized test altogether? I doubt it.

We often tell ourselves that this technology will democratize education. We’ll be able to learn what we want from anywhere. And maybe it will.

But that narrative doesn’t do justice to how complex educational systems are. We’re going to see a lot of AI-enabled bad pedagogy before we see meaningful, more progressive change.

I mean, to this day, Learning Management Systems are using AI to bolster bad pedagogy. Instructors can use AI to make quizzes faster, to track students’ work (and emotions!) more closely, and to churn out lesson plans faster.

But are we really taking steps to make education better with AI?

Sometimes, I think the answer is yes.

Sometimes, like today, I feel like we’re using AI to shore up a bad system.

We’re Still in the “Keeping Up Appearances” Phase

There’s a common misconception about Galileo and the “discovery” that the Sun, and not the Earth, is the center of the solar system.

According to public imagination, Galileo and a bunch of other scientists used math to figure out that the Sun is the center of the solar system and (bam!) people went through a major paradigm shift.

That’s not true.

In fact, for the next hundred years or so, scientists went back to their charts of the universe and redrew them. They tried to make the math work so that Galileo’s numbers and calculations were right, but (thank God!) the Earth was still the center of everything.

It’s called “keeping up appearances.” Thomas Kuhn writes about it in The Structure of Scientific Revolutions.

If AI will force a paradigm shift in education, then there’s a good chance we’re in the “Keeping Up Appearances” part of the process.

The powers that be would rather spend time and money using AI to shore up exams (yay, tests) than revisit the entire system.

Old habits die hard.

Some Things I Learned This Week

You can use ChatGPT Plus as on-the-spot tech support: Having a tech problem? Try taking a screenshot and having ChatGPT fix it!

Darren Coxon shared (on LinkedIn) a full breakdown on how to create educational chatbots.

The video shorts supposedly created by Sora actually had a lot of human-performed editing: That seems tricky to me, OpenAI.

There’s an AI tool for creating accessible descriptions of images.

Jason, I’ve taken part in writing assessment sessions evaluating student-written essays in one of six scoring regions in the state of California using a statewide direct writing assessment strategy with a thorough training session, on-the-spot moderation for recalibrating drifting scorers and mediating greater than one point splits, and qualitative reports back to districts. It was incredible. I’ve also helped design scored middle school portfolios produced in classrooms across 19 states for a multi year project that provided proof of concept for large-scale portfolios. I worked on PACT, a state level assessment consortium that developed a way to use portfolios in teacher prep programs to assess capacity to teach writing. You are generalizing from a narrow slice of experience. I’ve worked with ETS on multiple-choice and constructed response measures. They are pretty good for quick and dirty but indefensible in contrast with NSP, PACT, CAP, Kentucky, Vermont, and all of the other projects. There is a deep body of research with a decided refusal to make use of it. Without defending it in this comment, let me say this: I believe using AI to evaluate student writing is a categorical mistake of immense proportions. And I would never, ever, ever use Texas as a model for assessment unless you want the simplest plain vanilla cut corners ideological measure available. My experience with Texas assessment is extensive.